How SOC 2 Automation Vendors Compete for High-Intent Search Demand

If you market a SOC 2 automation platform, high-intent search demand is already in motion. Buyers aren’t opening Google to “learn SOC 2.” They’re trying to decide which vendor belongs on the shortlist and which ones don’t.

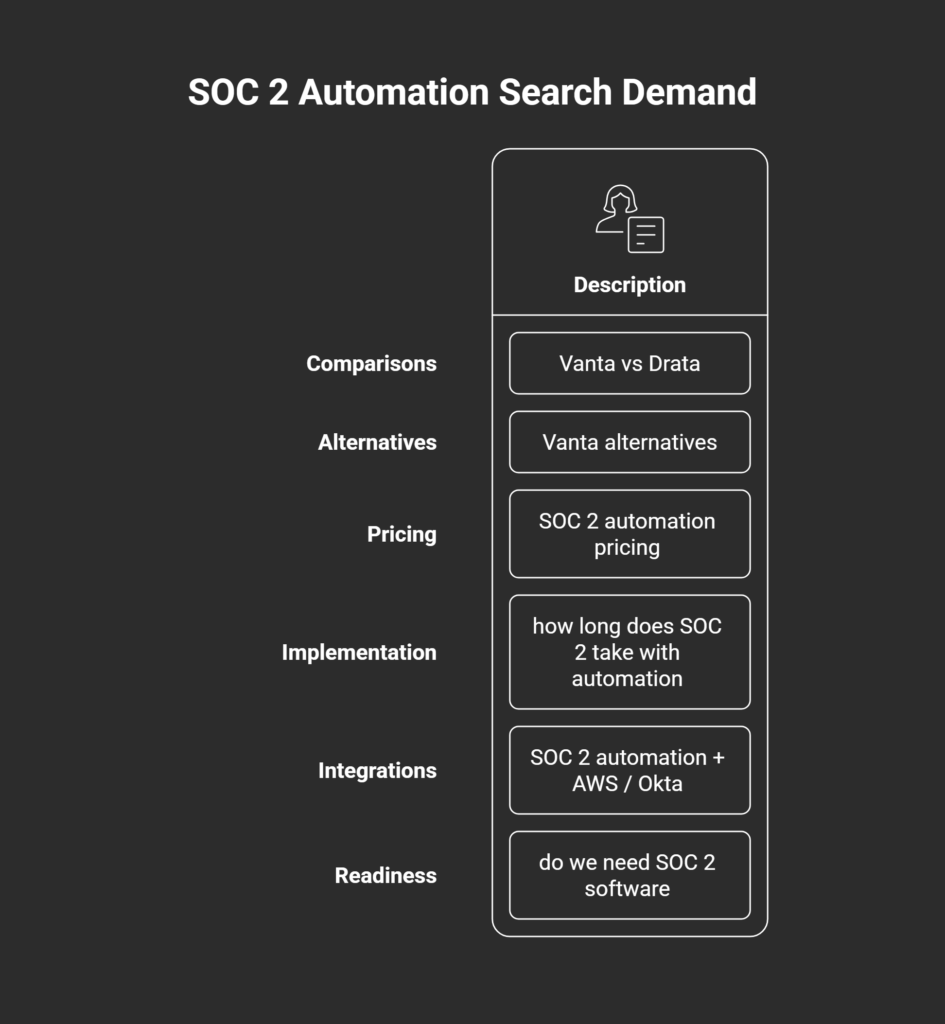

That shows up in searches like:

- Comparisons: “Vanta vs Drata”

- Alternatives: “Vanta alternatives”

- Pricing: “SOC 2 automation pricing”

- Implementation: “how long does SOC 2 take with automation”

- Integrations: “SOC 2 automation + AWS / Okta”

- Readiness: “do we need SOC 2 software”

This is where SOC 2 automation SEO earns its keep. The work is less about producing more pages and more about making sure the right pages exist when evaluation is already happening.

In this article, we’ll break down the demand types, the BOFU page formats that capture them, and how vendors compete in these search results without leaning on hype or generic SaaS SEO advice.

Key Takeaways

- High-intent SOC 2 searches reflect active evaluation, not early research.

- BOFU SEO works when pages reduce buyer uncertainty during shortlisting.

- Comparisons, alternatives, pricing, integrations, and implementation pages carry the most weight.

- Winning content emphasizes clarity, specificity, proof, and honest tradeoffs.

- Rankings matter less than helping buyers decide with confidence before sales enters the picture.

What “high-intent search demand” looks like in SOC 2 automation

In SOC 2 automation, “high-intent search demand” is easy to spot because it clusters around a single moment: evaluation. Someone has a real compliance deadline, a real buyer committee, and a real risk of choosing the wrong tool.

Most of that demand falls into four buckets:

- Evaluation searches

These are category-entry queries from buyers building a shortlist.

Examples: “SOC 2 automation software”, “SOC 2 compliance platform”

- Comparison searches

These show up once a buyer has 2–3 vendors in mind and needs a fast way to separate them.

Examples: “Vanta vs Drata”, “Secureframe vs Vanta”

- Alternatives searches

These are usually driven by a constraint: pricing, fit, implementation overhead, or internal requirements.

Examples: “Vanta alternatives”, “Drata alternatives”

- Objection / readiness searches

These come from buyers trying to justify the purchase internally or pressure-test whether software is even necessary.

Examples: “Do we need SOC 2 software?”, “SOC 2 automation worth it for startups?”

None of these are curiosity keywords. They reflect active shortlist behavior, where the buyer is trying to reduce uncertainty before a demo gets booked.

The real competition isn’t rankings

In compliance software markets, search visibility alone rarely decides the outcome. Most buyers can find multiple vendors ranking for the same high-intent queries. What separates them is how quickly each page helps the buyer feel confident moving forward.

SOC 2 automation buyers tend to evaluate through a risk lens. They want to know whether a tool will fit their environment, meet auditor expectations, and avoid surprises during implementation. Feature lists matter, but only after those uncertainties are addressed.

At the high-intent stage, SEO becomes a trust contest. Pages that perform well consistently do four things:

- Clarity: they explain what the product does in concrete terms, without abstraction

- Specificity: they name who the tool is for and where it is not a fit

- Proof: they support claims with verifiable details and realistic scenarios

- Constraints and tradeoffs: they acknowledge limitations instead of hiding them

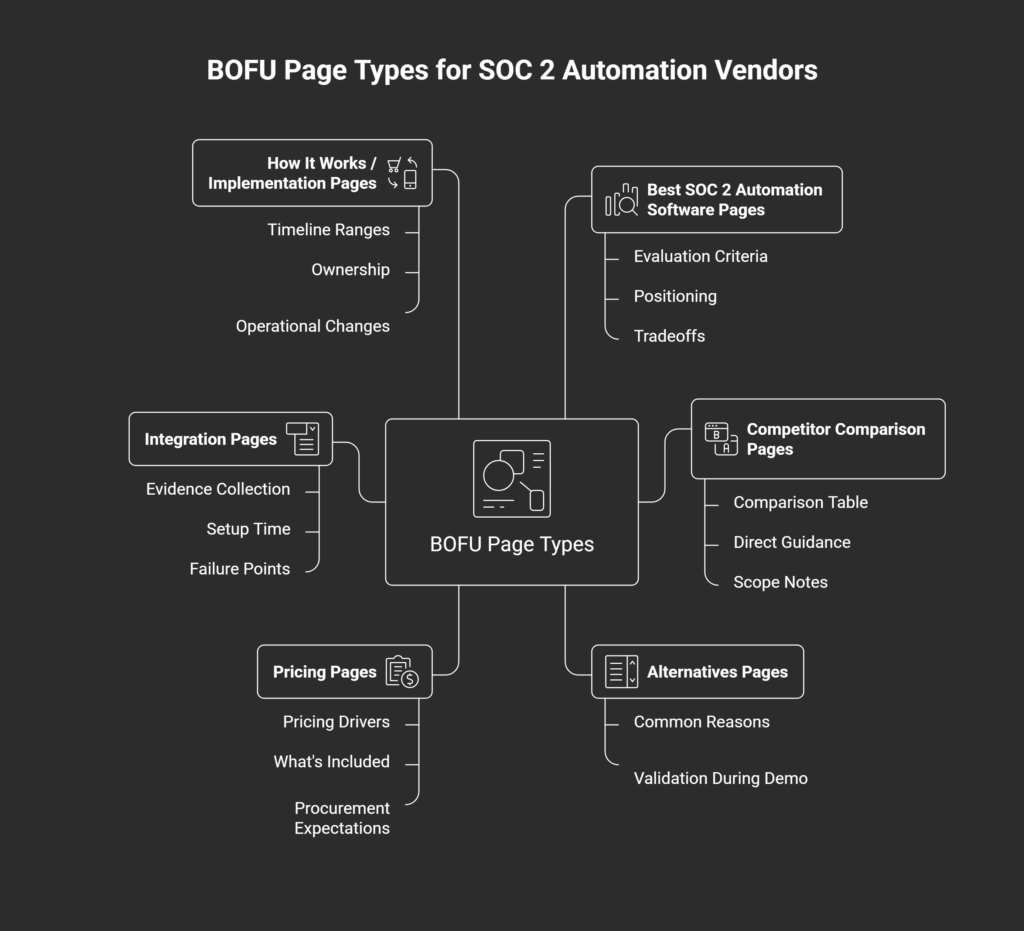

The 6 BOFU page types for SOC 2 automation vendors

Once a buyer reaches the shortlist stage, page format matters as much as messaging. In SOC 2 automation, a small set of BOFU page types consistently shape evaluation because they align with how buyers try to reduce risk.

Below are the six page types that tend to carry the most weight, along with what they need to do their job.

1. “Best SOC 2 automation software” pages

What this page is:

A category map for buyers entering the market or pressure-testing early assumptions.

What buyers want:

A clear view of how the market is structured and where each tool fits.

Must include:

- Evaluation criteria that reflect real buying concerns

- Clear positioning on who each tool is best for

- Honest tradeoffs between approaches or product philosophies

What it influences:

Early shortlist formation and internal alignment.

Common mistake:

Publishing a shallow listicle with no decision framework, which creates more confusion than clarity.

2. Competitor comparison pages

What this page is:

A disambiguation tool for buyers choosing between two known options.

What buyers want:

A fast, credible way to understand meaningful differences.

Must include:

- A comparison table that covers features and outcomes

- Direct guidance such as “choose X if / choose Y if”

- Scope notes that clarify what is and isn’t included

What it influences:

Final shortlist ordering and demo prioritization.

Common mistake:

Performing neutrality while avoiding specifics, which reads as evasive.

3. Alternatives pages

What this page is:

An escape hatch for buyers encountering a constraint with a known vendor.

What buyers want:

A small set of viable options that solve the same underlying problem.

Must include:

- Common reasons buyers look beyond the incumbent

- What to validate during a demo to avoid repeating the same issue

What it influences:

Shortlist replacement and late-stage vendor switches.

Common mistake:

Attacking competitors directly, which tends to erode trust in regulated markets.

4. Pricing pages

What this page is:

A budget and procurement alignment tool.

What buyers want answered:

“Is this in range for us?” and “What actually drives cost?”

Must include:

- Core pricing drivers (team size, integrations, frameworks, entities)

- What’s included versus add-on

- Clear procurement expectations, even if sales is required

What it influences:

Deal qualification and internal approval.

Common mistake:

Hiding all context behind a form.

5. Integration pages

What this page is:

An implementation confidence check.

What buyers want:

Certainty that evidence collection will work in their environment.

Must include:

- What evidence is collected and how

- Setup time and prerequisites

- Common failure points or limitations

What it influences:

Technical buy-in and rollout confidence.

Common mistake:

Thin pages that only state “we integrate with.”

6. “How it works / implementation” pages

What this page is:

A reality-setting document for post-purchase expectations.

What buyers want:

A realistic picture of adoption, not a simplified promise.

Must include:

- Timeline ranges rather than fixed dates

- Clear ownership between vendor and customer

- What changes operationally after purchase

What it influences:

Final go/no-go decisions and onboarding readiness.

Common mistake:

Reducing a complex process to an oversimplified checklist.

How vendors actually compete: the 5 levers that decide who wins

By the time buyers reach high-intent SOC 2 automation searches, most vendors look similar on the surface. Rankings matter, but they rarely decide the winner on their own. Outcomes are shaped by a small set of strategic choices that influence how quickly a buyer can decide with confidence.

Five levers tend to make the difference.

1) Page specificity to a buyer and scenario

Strong pages speak to a concrete situation rather than a broad market. That might mean drawing clear lines between:

- startups versus enterprise teams

- first-time SOC 2 versus renewal audits

- single-product companies versus multi-entity environments

Specificity signals fit and saves the buyer time.

2) Proof that doesn’t read like marketing

Credibility comes from precision, not polish. Effective pages combine:

- clear claims paired with explicit constraints

- customer examples used to illustrate context, not success metrics

- honest statements about what the product does not do

This lowers skepticism and sets realistic expectations.

3) Comparison clarity that supports fast scanning

Buyers often skim before they read. Pages that win reduce effort by using:

- structured tables and descriptive headings

- direct answers to common questions

- consistent terminology across sections

Clarity shortens the path from question to conclusion.

4) Internal linking that mirrors evaluation paths

High-intent readers move in predictable sequences. Common paths include:

- comparison → pricing → implementation → demo

- alternatives → how it works → security or trust documentation

Linking should guide, not distract, reinforcing momentum rather than forcing exploration.
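One way to check whether your internal links follow these paths is a small audit script. The sketch below is illustrative only: the page keys (`comparison`, `how-it-works`, `trust`, and so on) and the `site_links` map are hypothetical stand-ins for whatever your CMS or crawler exports.

```python
# Evaluation paths taken from the sequences described above.
# Page keys are hypothetical labels, not real URLs.
EVALUATION_PATHS = [
    ["comparison", "pricing", "implementation", "demo"],
    ["alternatives", "how-it-works", "trust"],
]


def missing_links(site_links, paths):
    """Return (source, target) pairs where a page does not link to the next step."""
    gaps = []
    for path in paths:
        # Walk each consecutive pair of steps in the path.
        for source, target in zip(path, path[1:]):
            if target not in site_links.get(source, set()):
                gaps.append((source, target))
    return gaps


# Assumed example data: which pages each page currently links to.
site_links = {
    "comparison": {"pricing"},
    "pricing": {"demo"},
    "implementation": {"demo"},
    "alternatives": {"how-it-works"},
    "how-it-works": set(),
}

gaps = missing_links(site_links, EVALUATION_PATHS)
for source, target in gaps:
    print(f"{source} page should link to {target}")
```

In this made-up data set, the pricing page skips straight to the demo and the how-it-works page never points to trust documentation, so both gaps are reported.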

5) Content maintenance as a quiet moat

SOC 2 platforms evolve quickly. Integration depth changes. Audit expectations shift. Pages that fall out of date lose trust without obvious signals. Regular updates preserve accuracy and keep evaluation content credible long after it first ranks.

Why most SOC 2 vendor content fails (even when it ranks)

Many SOC 2 automation pages rank because they match the right keywords, not because they help buyers decide. That gap shows up quickly once someone actually reads the page.

Buyers tend to notice the same failure patterns almost immediately:

- An “SEO content voice.” Buzzwords, abstract benefits, and vague promises signal that the page was written to perform, not to inform.

- An undefined ICP. Content that speaks to “any company pursuing SOC 2” rarely feels relevant to anyone in particular.

- No tradeoffs or constraints. When every feature sounds universally beneficial, credibility erodes. Real buyers expect limits.

- Feature dumping instead of decision support. Long lists replace explanations of when a capability matters and when it doesn’t.

- Pages built for Google, not evaluation. Headings, tables, and links exist to capture queries rather than guide a decision.

In high-trust markets, these issues don’t usually cause sharp drops in traffic. Instead, buyers stop trusting what they’re reading, and conversion suffers even as rankings hold. Over time, that trust decay weakens demo quality, slows sales cycles, and undercuts the very demand the content was meant to capture.

A simple execution plan

A practical execution plan looks like this:

Step 1: Map your BOFU demand

Start by listing 20–40 high-intent queries across the four demand buckets discussed earlier: evaluation, comparison, alternatives, and objections. These should reflect real sales conversations and common pre-demo questions, not tool-generated volume.
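If the query list lives in a spreadsheet or a plain text file, a short script can do a first-pass sort into the four buckets. This is a minimal sketch under stated assumptions: the keyword rules in `BUCKET_RULES` are hypothetical heuristics, and anything they miss falls into the evaluation bucket by default, so the output still needs a manual review against real sales conversations.

```python
from collections import defaultdict

# Hypothetical keyword rules. Order matters: the first matching bucket wins.
BUCKET_RULES = [
    ("comparison", (" vs ", " versus ")),
    ("alternatives", ("alternative", "competitor")),
    ("objection", ("do we need", "worth it")),
]


def classify_query(query: str) -> str:
    """Assign a search query to one of the four demand buckets."""
    # Pad with spaces so markers like " vs " match at word boundaries.
    text = f" {query.lower().strip()} "
    for bucket, markers in BUCKET_RULES:
        if any(marker in text for marker in markers):
            return bucket
    # Assumption: anything unmatched is treated as a category-entry query.
    return "evaluation"


def map_demand(queries):
    """Group queries by bucket."""
    grouped = defaultdict(list)
    for query in queries:
        grouped[classify_query(query)].append(query)
    return dict(grouped)


demand = map_demand([
    "Vanta vs Drata",
    "Vanta alternatives",
    "do we need SOC 2 software",
    "SOC 2 automation software",
])
for bucket, queries in demand.items():
    print(bucket, queries)
```

A bucket with very few entries is a signal that the list may be missing part of the evaluation journey.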

Step 2: Choose six “money pages” first

Prioritize depth over coverage:

- two competitor comparison pages

- two alternatives pages

- one pricing or decision-clarity page

- one implementation or integrations page

This set usually covers the majority of late-stage search behavior.

Step 3: Build a minimum evaluation loop

Each page should clearly answer three questions:

- who this is for

- what changes if the buyer chooses this option

- what to validate during a demo

Pages should link to each other in ways that reflect how buyers move through evaluation.

Step 4: Update monthly to stay accurate

Revisit content to reflect product changes, integrations, and audit expectations. The goal isn’t freshness signals. It’s maintaining trust as the category evolves.

High-intent SEO is pre-demo positioning

By the time a buyer schedules a demo, most of the real evaluation has already happened. Opinions form earlier, shaped by the pages they read while narrowing options and pressure-testing fit. High-intent search is where that momentum starts.

If you want to see how these individual pages fit into a broader, repeatable approach, the full SOC 2 automation SEO playbook lays out the system end-to-end and shows how it works in practice.